BP Neural Network GUI Application Code (BP Neural Network Code)

Posted by admin: 2022-12-19 10:47

This article covers BP neural network GUI application code and the related BP neural network code: MATLAB examples built on newff, and a C++ implementation.

BP neural network MATLAB source code explained

newff creates a feed-forward BP network. Syntax:

net = newff(PR,[S1 S2...SNl],{TF1 TF2...TFNl},BTF,BLF,PF)

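where:

- PR: the R×2 matrix of minimum and maximum values for the R input elements;
- Si: the number of neurons in layer i, for Nl layers in total;
- TFi: the transfer function of layer i, default 'tansig';
- BTF: the training function of the BP network, default 'trainlm';
- BLF: the weight/bias learning function, default 'learngdm';
- PF: the performance function, default 'mse' (the error measure).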

e.g.

P = [0 1 2 3 4 5 6 7 8 9 10];

T = [0 1 2 3 4 3 2 1 2 3 4];

net = newff([0 10],[5 1],{'tansig' 'purelin'});

net.trainParam.show = 50; % report progress every 50 epochs

net.trainParam.epochs = 500; % at most 500 training epochs

net.trainParam.goal = 0.01; % target value for the training error

net = train(net,P,T); % train the network

Y = sim(net,P);

figure % open a new figure window

plot(P,T,P,Y,'o')
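To make the [5 1] layout with {'tansig' 'purelin'} concrete, here is a minimal C++ sketch of what a forward pass through such a network computes for one scalar input. It is an illustration only, not the toolbox's implementation: the TinyNet struct and the forward function are hypothetical names, the weights are supplied by the caller rather than trained, and any input or output preprocessing the toolbox may apply is ignored.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical container for a 1-input, H-hidden, 1-output network.
struct TinyNet {
    std::vector<double> w1;  // input-to-hidden weights, one per hidden unit
    std::vector<double> b1;  // hidden-layer biases
    std::vector<double> w2;  // hidden-to-output weights
    double b2 = 0.0;         // output bias
};

// tansig written as 2 / (1 + exp(-2n)) - 1, which equals tanh(n).
double tansig(double n) { return 2.0 / (1.0 + std::exp(-2.0 * n)) - 1.0; }

// Forward pass: tansig hidden layer followed by a linear (purelin) output.
double forward(const TinyNet& net, double x) {
    assert(net.w1.size() == net.b1.size() && net.w1.size() == net.w2.size());
    double y = net.b2;
    for (std::size_t i = 0; i < net.w1.size(); ++i) {
        y += net.w2[i] * tansig(net.w1[i] * x + net.b1[i]);
    }
    return y;
}
```

With five entries in each weight vector this mirrors the 1-5-1 shape of the example; training (what train does) is deliberately left out of the sketch.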

BP neural network prediction code

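It looks like you are working on a time series.

See 《神经网络之家》 (nnetinfo), Tutorial 2: applying neural networks to time series.

It is explained there. I have excerpted the code for you:

% time series: applying a neural network to a time series

% This code comes from 《神经网络之家》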

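timeList = 0 :0.01 : 2*pi; % generate the time points

X = sin(timeList); % generate the time-series signal

% Use x(t-5), x(t-4), x(t-3), x(t-2), x(t-1) as inputs to predict x(t); x(t) is the output data

inputData = [X(1:end-5);X(2:end-4);X(3:end-3);X(4:end-2);X(5:end-1)];

outputData = X(6:end);

% Build the network from the input/output data (inputData, outputData).

% Use 3 hidden nodes, tansig and purelin as the hidden- and output-layer transfer functions, and trainlm for training.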

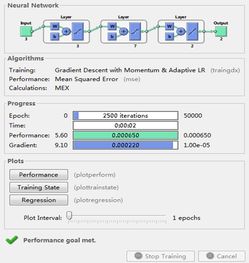

net = newff(inputData,outputData,3,{'tansig','purelin'},'trainlm');

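% Set some common parameters

net.trainParam.goal = 0.0001; % training goal: mean squared error below 0.0001

net.trainParam.show = 400; % display results every 400 epochs

net.trainParam.epochs = 1500; % maximum number of training epochs: 1500

[net,tr] = train(net,inputData,outputData); % train the network with the toolbox's train function

simout = sim(net,inputData); % get the network's predictions with the toolbox's sim function

figure; % open a new figure window

t=1:length(simout);

plot(t,outputData,t,simout,'r') % plot the original output against the network's prediction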
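The input/output construction at the top of this script (five lagged samples predicting the next one) can be sketched outside MATLAB as well. The C++ below is a minimal illustration under stated assumptions: LaggedSamples and makeLagged are hypothetical names, and each sample is stored as a row, whereas the MATLAB code stores each sample as a column of inputData.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical result type: one input row per sample plus its target value.
struct LaggedSamples {
    std::vector<std::vector<double>> inputs;  // each row holds `lag` consecutive values
    std::vector<double> targets;              // the value that follows each row
};

// Build (x(t-lag), ..., x(t-1)) -> x(t) pairs from a series.
LaggedSamples makeLagged(const std::vector<double>& series, std::size_t lag) {
    assert(lag > 0);
    LaggedSamples out;
    if (series.size() <= lag) {
        return out;  // not enough data for a single sample
    }
    for (std::size_t t = lag; t < series.size(); ++t) {
        const auto first = series.begin() + static_cast<std::ptrdiff_t>(t - lag);
        const auto last = series.begin() + static_cast<std::ptrdiff_t>(t);
        out.inputs.emplace_back(first, last);  // copies the `lag` values before t
        out.targets.push_back(series[t]);
    }
    return out;
}
```

Calling makeLagged(series, 5) on a sampled sine wave would produce the same pairs as inputData and outputData, transposed.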

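%------------------ Appendix: extracting the mathematical expression ------------------

% To use the trained network without MATLAB's sim function, extract its mathematical expression;

% any software can then compute the output directly from that expression.

%============ Extract the mathematical expression ==================

% Extract the network's weights and thresholds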

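w12 = net.iw{1,1}; % weights from layer 1 (input) to layer 2 (hidden)

b2 = net.b{1}; % thresholds of layer 2 (hidden)

w23 = net.lw{2,1}; % weights from layer 2 (hidden) to layer 3 (output)

b3 = net.b{2}; % thresholds of layer 3 (output)

% Because the data is normalized, the normalization information must be captured first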

iMax = max(inputData,[],2);

iMin = min(inputData,[],2);

oMax = max(outputData,[],2);

oMin = min(outputData,[],2);

% Method 1: normalize, compute the output, then de-normalize

normInputData=2*(inputData -repmat(iMin,1,size(inputData,2)))./repmat(iMax-iMin,1,size(inputData,2)) -1;

tmp = w23*tansig( w12 *normInputData + repmat(b2,1,size(normInputData,2))) + repmat(b3,1,size(normInputData,2));

myY = (tmp+1).*repmat(oMax-oMin,1,size(outputData,2))./2 + repmat(oMin,1,size(outputData,2));

% Method 2: compute with the weights and thresholds that apply to the raw (un-normalized) data

% For the formulas, see 《提取对应原始数据的权重和阈值》

W12 = w12 * 2 ./repmat(iMax' -iMin',size(w12,1),1);

B2 = -w12* (2*iMin ./(iMax - iMin) + 1) + b2;

W23 = w23 .*repmat((oMax -oMin),1,size(w23,2))/2;

B3 = (oMax -oMin) .*b3 /2 + (oMax -oMin)/2 + oMin;

% Final mathematical expression:

myY2 = W23 *tansig( W12 *inputData + repmat(B2,1,size(inputData,2))) + repmat(B3,1,size(inputData,2));
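The two methods above should agree, because Method 2 only folds the min-max scaling into the weights and thresholds. The C++ sketch below checks that idea for a network with one hidden layer and a single output. It is a hedged illustration: Net, eval, evalNormalized and foldScaling are hypothetical names, std::tanh stands in for tansig, and the scaling is assumed to map each input and the output linearly onto [-1, 1], as in the MATLAB lines above.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec = std::vector<double>;
using Mat = std::vector<Vec>;  // Mat[h][i]: weight from input i to hidden unit h

struct Net {
    Mat w12;          // hidden x input weights
    Vec b2;           // hidden thresholds
    Vec w23;          // output weights, one per hidden unit
    double b3 = 0.0;  // output threshold
};

// y = w23 * tanh(w12 * x + b2) + b3
double eval(const Net& n, const Vec& x) {
    assert(n.w12.size() == n.b2.size() && n.w12.size() == n.w23.size());
    double y = n.b3;
    for (std::size_t h = 0; h < n.w12.size(); ++h) {
        double a = n.b2[h];
        for (std::size_t i = 0; i < x.size(); ++i) a += n.w12[h][i] * x[i];
        y += n.w23[h] * std::tanh(a);
    }
    return y;
}

// Method 1: normalize the input, evaluate, then de-normalize the output.
double evalNormalized(const Net& n, const Vec& x, const Vec& iMin,
                      const Vec& iMax, double oMin, double oMax) {
    Vec xn(x.size());
    for (std::size_t i = 0; i < x.size(); ++i)
        xn[i] = 2.0 * (x[i] - iMin[i]) / (iMax[i] - iMin[i]) - 1.0;
    return (eval(n, xn) + 1.0) * (oMax - oMin) / 2.0 + oMin;
}

// Method 2: fold the scaling into the weights so raw inputs can be used.
Net foldScaling(const Net& n, const Vec& iMin, const Vec& iMax,
                double oMin, double oMax) {
    Net f = n;
    const double r = oMax - oMin;
    for (std::size_t h = 0; h < n.w12.size(); ++h) {
        for (std::size_t i = 0; i < iMin.size(); ++i) {
            const double s = 2.0 / (iMax[i] - iMin[i]);
            f.w12[h][i] = n.w12[h][i] * s;                  // W12
            f.b2[h] -= n.w12[h][i] * (s * iMin[i] + 1.0);   // B2
        }
        f.w23[h] = n.w23[h] * r / 2.0;                      // W23
    }
    f.b3 = r * n.b3 / 2.0 + r / 2.0 + oMin;                 // B3
    return f;
}
```

For any input x, eval(foldScaling(net, ...), x) and evalNormalized(net, x, ...) should match up to floating-point rounding, which is the same relationship as myY2 and myY in the script.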

MATLAB BP neural network prediction code

P=[1;2;3;4;5]; % months

P=[P/50];

T=[2;3;4;5;6]; % monthly training targets

T=[T/50];

threshold=[0 1;0 1;0 1;0 1;0 1]; % one [min max] row per input; P has 5 rows

net=newff(threshold,[15,5],{'tansig','logsig'},'trainlm'); % 5 output neurons to match the 5 rows of T

net.trainParam.epochs=2000;

net.trainParam.goal=0.001;

LP.lr=0.1;

net=train(net,P,T);

P_test=[June]'; % placeholder for June's data, which is used to predict July

P_test=[P_test/50];

y=sim(net,P_test)

y=[y*50]

Looking for C++ source code for the BP neural network algorithm

// AnnBP.cpp: implementation of the CAnnBP class.

//

//////////////////////////////////////////////////////////////////////

#include "StdAfx.h"

#include "AnnBP.h"

#include <math.h>

#include <stdio.h>

#include <stdlib.h>

//////////////////////////////////////////////////////////////////////

// Construction/Destruction

//////////////////////////////////////////////////////////////////////

CAnnBP::CAnnBP()

{

eta1=0.3;

momentum1=0.3;

}

CAnnBP::~CAnnBP()

{

}

/*** Return a random double between 0.0 and 1.0 ***/

double CAnnBP::drnd()

{

return ((double) rand() / (double) BIGRND);

}

/*** Return a random double between -1.0 and 1.0 (assumes RAND_MAX is 32767) ***/

double CAnnBP::dpn1()

{

return (double) (rand())/(32767/2)-1;

}

/*** Activation function; currently the sigmoid (S-shaped) function ***/

double CAnnBP::squash(double x)

{

return (1.0 / (1.0 + exp(-x)));

}

/*** Allocate a 1-D array of doubles ***/

double* CAnnBP::alloc_1d_dbl(int n)

{

double *new1;

new1 = (double *) malloc ((unsigned) (n * sizeof (double)));

if (new1 == NULL) {

AfxMessageBox("ALLOC_1D_DBL: Couldn't allocate array of doubles\n");

return (NULL);

}

return (new1);

}

/*** Allocate a 2-D array of doubles ***/

double** CAnnBP::alloc_2d_dbl(int m, int n)

{

int i;

double **new1;

new1 = (double **) malloc ((unsigned) (m * sizeof (double *)));

if (new1 == NULL) {

AfxMessageBox("ALLOC_2D_DBL: Couldn't allocate array of dbl ptrs\n");

return (NULL);

}

for (i = 0; i < m; i++) {

new1[i] = alloc_1d_dbl(n);

}

return (new1);

}

/*** Initialize the weights with random values ***/

void CAnnBP::bpnn_randomize_weights(double **w, int m, int n)

{

int i, j;

for (i = 0; i <= m; i++) {

for (j = 0; j <= n; j++) {

w[i][j] = dpn1();

}

}

}

/*** Initialize the weights to zero ***/

void CAnnBP::bpnn_zero_weights(double **w, int m, int n)

{

int i, j;

for (i = 0; i <= m; i++) {

for (j = 0; j <= n; j++) {

w[i][j] = 0.0;

}

}

}

/*** Seed the random number generator ***/

void CAnnBP::bpnn_initialize(int seed)

{

CString msg,s;

msg="Random number generator seed:";

s.Format("%d",seed);

AfxMessageBox(msg+s);

srand(seed);

}

/*** Create a BP network ***/

BPNN* CAnnBP::bpnn_internal_create(int n_in, int n_hidden, int n_out)

{

BPNN *newnet;

newnet = (BPNN *) malloc (sizeof (BPNN));

if (newnet == NULL) {

printf("BPNN_CREATE: Couldn't allocate neural network\n");

return (NULL);

}

newnet->input_n = n_in;

newnet->hidden_n = n_hidden;

newnet->output_n = n_out;

newnet->input_units = alloc_1d_dbl(n_in + 1);

newnet->hidden_units = alloc_1d_dbl(n_hidden + 1);

newnet->output_units = alloc_1d_dbl(n_out + 1);

newnet->hidden_delta = alloc_1d_dbl(n_hidden + 1);

newnet->output_delta = alloc_1d_dbl(n_out + 1);

newnet->target = alloc_1d_dbl(n_out + 1);

newnet->input_weights = alloc_2d_dbl(n_in + 1, n_hidden + 1);

newnet->hidden_weights = alloc_2d_dbl(n_hidden + 1, n_out + 1);

newnet->input_prev_weights = alloc_2d_dbl(n_in + 1, n_hidden + 1);

newnet->hidden_prev_weights = alloc_2d_dbl(n_hidden + 1, n_out + 1);

return (newnet);

}

/* Free the memory used by the BP network */

void CAnnBP::bpnn_free(BPNN *net)

{

int n1, n2, i;

n1 = net->input_n;

n2 = net->hidden_n;

free((char *) net->input_units);

free((char *) net->hidden_units);

free((char *) net->output_units);

free((char *) net->hidden_delta);

free((char *) net->output_delta);

free((char *) net->target);

for (i = 0; i <= n1; i++) {

free((char *) net->input_weights[i]);

free((char *) net->input_prev_weights[i]);

}

free((char *) net->input_weights);

free((char *) net->input_prev_weights);

for (i = 0; i <= n2; i++) {

free((char *) net->hidden_weights[i]);

free((char *) net->hidden_prev_weights[i]);

}

free((char *) net->hidden_weights);

free((char *) net->hidden_prev_weights);

free((char *) net);

}

/*** Create a BP network and initialize its weights ***/

BPNN* CAnnBP::bpnn_create(int n_in, int n_hidden, int n_out)

{

BPNN *newnet;

newnet = bpnn_internal_create(n_in, n_hidden, n_out);

#ifdef INITZERO

bpnn_zero_weights(newnet->input_weights, n_in, n_hidden);

#else

bpnn_randomize_weights(newnet->input_weights, n_in, n_hidden);

#endif

bpnn_randomize_weights(newnet->hidden_weights, n_hidden, n_out);

bpnn_zero_weights(newnet->input_prev_weights, n_in, n_hidden);

bpnn_zero_weights(newnet->hidden_prev_weights, n_hidden, n_out);

return (newnet);

}

void CAnnBP::bpnn_layerforward(double *l1, double *l2, double **conn, int n1, int n2)

{

double sum;

int j, k;

/*** Set up the threshold (bias) unit ***/

l1[0] = 1.0;

/*** For each neuron in the second layer ***/

for (j = 1; j <= n2; j++) {

/*** Compute the weighted sum of its inputs ***/

sum = 0.0;

for (k = 0; k <= n1; k++) {

sum += conn[k][j] * l1[k];

}

l2[j] = squash(sum);

}

}

/* Output-layer error */

void CAnnBP::bpnn_output_error(double *delta, double *target, double *output, int nj, double *err)

{

int j;

double o, t, errsum;

errsum = 0.0;

for (j = 1; j <= nj; j++) {

o = output[j];

t = target[j];

delta[j] = o * (1.0 - o) * (t - o);

errsum += ABS(delta[j]);

}

*err = errsum;

}

/* Hidden-layer error */

void CAnnBP::bpnn_hidden_error(double *delta_h, int nh, double *delta_o, int no, double **who, double *hidden, double *err)

{

int j, k;

double h, sum, errsum;

errsum = 0.0;

for (j = 1; j <= nh; j++) {

h = hidden[j];

sum = 0.0;

for (k = 1; k <= no; k++) {

sum += delta_o[k] * who[j][k];

}

delta_h[j] = h * (1.0 - h) * sum;

errsum += ABS(delta_h[j]);

}

*err = errsum;

}

/* Adjust the weights */

void CAnnBP::bpnn_adjust_weights(double *delta, int ndelta, double *ly, int nly, double **w, double **oldw, double eta, double momentum)

{

double new_dw;

int k, j;

ly[0] = 1.0;

for (j = 1; j <= ndelta; j++) {

for (k = 0; k <= nly; k++) {

new_dw = ((eta * delta[j] * ly[k]) + (momentum * oldw[k][j]));

w[k][j] += new_dw;

oldw[k][j] = new_dw;

}

}

}

/* Run a forward pass */

void CAnnBP::bpnn_feedforward(BPNN *net)

{

int in, hid, out;

in = net->input_n;

hid = net->hidden_n;

out = net->output_n;

/*** Feed forward input activations. ***/

bpnn_layerforward(net->input_units, net->hidden_units,

net->input_weights, in, hid);

bpnn_layerforward(net->hidden_units, net->output_units,

net->hidden_weights, hid, out);

}

/* Train the BP network */

void CAnnBP::bpnn_train(BPNN *net, double eta, double momentum, double *eo, double *eh)

{

int in, hid, out;

double out_err, hid_err;

in = net->input_n;

hid = net->hidden_n;

out = net->output_n;

/*** Feed forward input activations ***/

bpnn_layerforward(net->input_units, net->hidden_units,

net->input_weights, in, hid);

bpnn_layerforward(net->hidden_units, net->output_units,

net->hidden_weights, hid, out);

/*** Compute the error on the output and hidden layers ***/

bpnn_output_error(net->output_delta, net->target, net->output_units,

out, &out_err);

bpnn_hidden_error(net->hidden_delta, hid, net->output_delta, out,

net->hidden_weights, net->hidden_units, &hid_err);

*eo = out_err;

*eh = hid_err;

/*** Adjust the hidden-to-output and input-to-hidden weights ***/

bpnn_adjust_weights(net->output_delta, out, net->hidden_units, hid,

net->hidden_weights, net->hidden_prev_weights, eta, momentum);

bpnn_adjust_weights(net->hidden_delta, hid, net->input_units, in,

net->input_weights, net->input_prev_weights, eta, momentum);

}

/* Save the BP network to a file */

void CAnnBP::bpnn_save(BPNN *net, char *filename)

{

CFile file;

char *mem;

int n1, n2, n3, i, j, memcnt;

double dvalue, **w;

n1 = net->input_n; n2 = net->hidden_n; n3 = net->output_n;

printf("Saving %dx%dx%d network to '%s'\n", n1, n2, n3, filename);

try

{

file.Open(filename,CFile::modeWrite|CFile::modeCreate|CFile::modeNoTruncate);

}

catch(CFileException* e)

{

e->ReportError();

e->Delete();

}

/* Header: the three layer sizes */

file.Write(&n1,sizeof(int));

file.Write(&n2,sizeof(int));

file.Write(&n3,sizeof(int));

/* Input-to-hidden weights, copied into one contiguous buffer */

memcnt = 0;

w = net->input_weights;

mem = (char *) malloc ((unsigned) ((n1+1) * (n2+1) * sizeof(double)));

for (i = 0; i <= n1; i++) {

for (j = 0; j <= n2; j++) {

dvalue = w[i][j];

fastcopy(&mem[memcnt], &dvalue, sizeof(double));

memcnt += sizeof(double);

}

}

file.Write(mem,sizeof(double)*(n1+1)*(n2+1));

free(mem);

/* Hidden-to-output weights */

memcnt = 0;

w = net->hidden_weights;

mem = (char *) malloc ((unsigned) ((n2+1) * (n3+1) * sizeof(double)));

for (i = 0; i <= n2; i++) {

for (j = 0; j <= n3; j++) {

dvalue = w[i][j];

fastcopy(&mem[memcnt], &dvalue, sizeof(double));

memcnt += sizeof(double);

}

}

file.Write(mem, (n2+1) * (n3+1) * sizeof(double));

free(mem);

file.Close();

return;

}

/* Read a BP network from a file */

BPNN* CAnnBP::bpnn_read(char *filename)

{

char *mem;

BPNN *new1;

int n1, n2, n3, i, j, memcnt;

CFile file;

try

{

file.Open(filename,CFile::modeRead|CFile::modeCreate|CFile::modeNoTruncate);

}

catch(CFileException* e)

{

e->ReportError();

e->Delete();

}

// printf("Reading '%s'\n", filename);// fflush(stdout);

/* Header: the three layer sizes */

file.Read(&n1, sizeof(int));

file.Read(&n2, sizeof(int));

file.Read(&n3, sizeof(int));

new1 = bpnn_internal_create(n1, n2, n3);

// printf("'%s' contains a %dx%dx%d network\n", filename, n1, n2, n3);

// printf("Reading input weights..."); // fflush(stdout);

memcnt = 0;

mem = (char *) malloc (((n1+1) * (n2+1) * sizeof(double)));

file.Read(mem, ((n1+1)*(n2+1))*sizeof(double));

for (i = 0; i <= n1; i++) {

for (j = 0; j <= n2; j++) {

fastcopy(&(new1->input_weights[i][j]), &mem[memcnt], sizeof(double));

memcnt += sizeof(double);

}

}

free(mem);

// printf("Done\nReading hidden weights..."); //fflush(stdout);

memcnt = 0;

mem = (char *) malloc (((n2+1) * (n3+1) * sizeof(double)));

file.Read(mem, (n2+1) * (n3+1) * sizeof(double));

for (i = 0; i <= n2; i++) {

for (j = 0; j <= n3; j++) {

fastcopy(&(new1->hidden_weights[i][j]), &mem[memcnt], sizeof(double));

memcnt += sizeof(double);

}

}

free(mem);

file.Close();

printf("Done\n"); //fflush(stdout);

bpnn_zero_weights(new1->input_prev_weights, n1, n2);

bpnn_zero_weights(new1->hidden_prev_weights, n2, n3);

return (new1);

}

void CAnnBP::CreateBP(int n_in, int n_hidden, int n_out)

{

net=bpnn_create(n_in,n_hidden,n_out);

}

void CAnnBP::FreeBP()

{

bpnn_free(net);

}

void CAnnBP::Train(double *input_unit,int input_num, double *target,int target_num, double *eo, double *eh)

{

for(int i=1;i<=input_num;i++)

{

net->input_units[i]=input_unit[i-1];

}

for(int j=1;j<=target_num;j++)

{

net->target[j]=target[j-1];

}

bpnn_train(net,eta1,momentum1,eo,eh);

}

void CAnnBP::Identify(double *input_unit,int input_num,double *target,int target_num)

{

for(int i=1;i<=input_num;i++)

{

net->input_units[i]=input_unit[i-1];

}

bpnn_feedforward(net);

for(int j=1;j<=target_num;j++)

{

target[j-1]=net->output_units[j];

}

}

void CAnnBP::Save(char *filename)

{

bpnn_save(net,filename);

}

void CAnnBP::Read(char *filename)

{

net=bpnn_read(filename);

}

void CAnnBP::SetBParm(double eta, double momentum)

{

eta1=eta;

momentum1=momentum;

}

void CAnnBP::Initialize(int seed)

{

bpnn_initialize(seed);

}
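The core of the training step in this class is the pair of delta formulas in bpnn_output_error and bpnn_hidden_error plus the momentum update in bpnn_adjust_weights. The sketch below restates those three formulas in plain, MFC-free C++ so they can be tried in isolation. It is an illustration under assumptions, not a replacement for the class: the function names are hypothetical, it works on single units and single weights, and it uses ordinary 0-based vectors instead of the 1-based arrays with a bias slot at index 0 used above.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Sigmoid activation, the same formula as squash() above.
double sigmoid(double x) { return 1.0 / (1.0 + std::exp(-x)); }

// Output-layer delta for a sigmoid unit: o * (1 - o) * (t - o).
double outputDelta(double output, double target) {
    return output * (1.0 - output) * (target - output);
}

// Hidden-layer delta: h * (1 - h) * sum_k(delta_o[k] * w[k]),
// where w[k] is the weight from this hidden unit to output unit k.
double hiddenDelta(double hidden, const std::vector<double>& outDeltas,
                   const std::vector<double>& weightsToOutputs) {
    assert(outDeltas.size() == weightsToOutputs.size());
    double sum = 0.0;
    for (std::size_t k = 0; k < outDeltas.size(); ++k) {
        sum += outDeltas[k] * weightsToOutputs[k];
    }
    return hidden * (1.0 - hidden) * sum;
}

// Momentum update for one weight:
// step = eta * delta * activation + momentum * previousStep.
// Updates the weight and remembers the step for the next call.
double momentumStep(double& weight, double& previousStep, double eta,
                    double delta, double activation, double momentum) {
    const double step = eta * delta * activation + momentum * previousStep;
    weight += step;
    previousStep = step;
    return step;
}
```

For example, with the constructor defaults eta1 = 0.3 and momentum1 = 0.3, an output of 0.5 against a target of 1.0 gives a delta of 0.125, and the first update of a bias weight (activation 1.0) moves it by 0.0375.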

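Copyright notice: unless otherwise noted, articles on this site are original work by AH站长; when reposting, please credit the source and include a link to this article.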
